In this tutorial, we build a hands-on comparison between a synchronous RPC-based system and an asynchronous event-driven architecture to understand how real distributed systems behave under load and failure. We simulate downstream services with variable latency, overload conditions, and transient errors, and then drive both architectures with bursty traffic. By observing tail latency, retries, failures, and dead-letter queue growth, we examine how tight RPC coupling amplifies failures and how asynchronous event-driven designs trade immediate consistency for resilience. Throughout the tutorial, we focus on the practical mechanisms that engineers use to control cascading failures in production systems: retries, exponential backoff, circuit breakers, bulkheads, and queues.

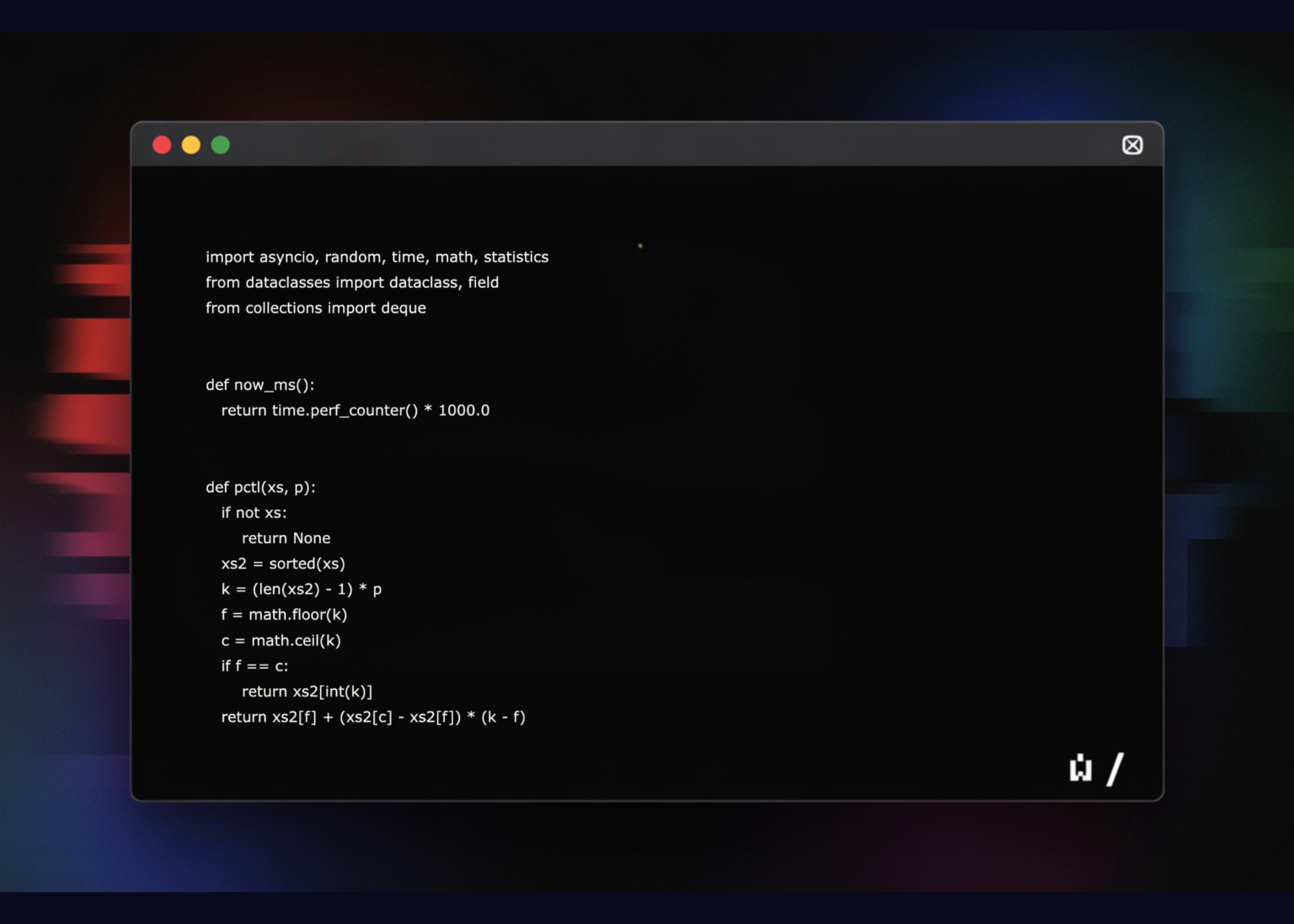

    import asyncio
    import math
    import random
    import statistics
    import time
    from collections import deque
    from dataclasses import dataclass, field


    def now_ms():
        """Monotonic clock reading in milliseconds."""
        return time.perf_counter() * 1000.0


    def pctl(xs, p):
        """Linearly interpolated percentile of xs for p in [0, 1]; None if xs is empty."""
        if not xs:
            return None
        xs2 = sorted(xs)
        k = (len(xs2) - 1) * p
        f = math.floor(k)
        c = math.ceil(k)
        if f == c:
            return xs2[int(k)]
        return xs2[f] + (xs2[c] - xs2[f]) * (k - f)


    @dataclass
    class Stats:
        latencies_ms: list = field(default_factory=list)
        ok: int = 0
        fail: int = 0
        dropped: int = 0
        retries: int = 0
        timeouts: int = 0
        cb_open: int = 0
        dlq: int = 0

        def summary(self, name):
            l = self.latencies_ms
            return {
                "name": name,
                "ok": self.ok,
                "fail": self.fail,
                "dropped": self.dropped,
                "retries": self.retries,
                "timeouts": self.timeouts,
                "cb_open": self.cb_open,
                "dlq": self.dlq,
                "lat_p50_ms": round(pctl(l, 0.50), 2) if l else None,
                "lat_p95_ms": round(pctl(l, 0.95), 2) if l else None,
                "lat_p99_ms": round(pctl(l, 0.99), 2) if l else None,
                "lat_mean_ms": round(statistics.mean(l), 2) if l else None,
            }

We define the core utilities and data structures used throughout the tutorial. We establish a timing helper, a percentile calculation, and a unified metrics container that tracks latency, retries, failures, and tail behavior. This gives us a consistent way to measure and compare the RPC and event-driven executions.
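To see why we report percentiles rather than only the mean, it can help to run the interpolation on a tiny sample. The sketch below re-implements the same linear-interpolation rule under the hypothetical name `percentile`, and the latency values are made up for illustration.

```python
import math


def percentile(xs, p):
    """Linearly interpolated percentile, p in [0, 1]; mirrors the pctl helper."""
    if not xs:
        return None
    s = sorted(xs)
    k = (len(s) - 1) * p          # fractional rank into the sorted sample
    lo, hi = math.floor(k), math.ceil(k)
    if lo == hi:
        return s[lo]
    return s[lo] + (s[hi] - s[lo]) * (k - lo)


# Hypothetical latencies in ms: four fast calls and one slow outlier.
sample_ms = [10, 20, 30, 40, 100]

p50 = percentile(sample_ms, 0.50)   # rank 2.0 lands exactly on 30
p95 = percentile(sample_ms, 0.95)   # rank 3.8 interpolates between 40 and 100
mean = sum(sample_ms) / len(sample_ms)

print("p50:", p50, "p95:", round(p95, 2), "mean:", mean)
```

In this sample the single outlier barely moves the median but pulls p95 close to the slowest call, which is the kind of tail behavior the `Stats.summary` fields are meant to expose.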

    @dataclass
    class FailureModel:
        base_latency_ms: float = 8.0
        jitter_ms: float = 6.0
        fail_prob: float = 0.05
        overload_fail_prob: float = 0.40
        overload_latency_ms: float = 50.0

        def sample(self, load_factor: float):
            """Return (latency_ms, should_fail) for the given load factor."""
            base = self.base_latency_ms + random.random() * self.jitter_ms
            if load_factor > 1.0:
                # Past capacity, both latency and failure probability grow with the excess load.
                base += (load_factor - 1.0) * self.overload_latency_ms
                fail_p = min(0.95, self.fail_prob + (load_factor - 1.0) * self.overload_fail_prob)
            else:
                fail_p = self.fail_prob
            return base, (random.random() < fail_p)


    class CircuitBreaker:
        def __init__(self, fail_threshold=8, window=20, open_ms=500):
            self.fail_threshold = fail_threshold
            self.window = window
            self.open_ms = open_ms
            self.events = deque(maxlen=window)  # True marks a failed call
            self.open_until_ms = 0.0

        def allow(self):
            return now_ms() >= self.open_until_ms

        def record(self, ok: bool):
            self.events.append(not ok)
            # Trip only once the window is full and holds enough failures.
            if len(self.events) >= self.window and sum(self.events) >= self.fail_threshold:
                self.open_until_ms = now_ms() + self.open_ms


    class Bulkhead:
        """Caps the number of concurrent calls with a semaphore."""

        def __init__(self, limit):
            self.sem = asyncio.Semaphore(limit)

        async def __aenter__(self):
            await self.sem.acquire()

        async def __aexit__(self, exc_type, exc, tb):
            self.sem.release()


    def exp_backoff(attempt, base_ms=20, cap_ms=400):
        # Full jitter: uniform in [0, min(cap_ms, base_ms * 2 ** (attempt - 1))).
        return random.random() * min(cap_ms, base_ms * (2 ** (attempt - 1)))

We model failure behavior and the resilience primitives that shape system stability. We simulate overload-sensitive latency and failures, and we introduce circuit breakers, bulkheads, and exponential backoff to control cascading effects. These components let us experiment with safe versus unsafe distributed-system configurations.
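The breaker's trip rule is easy to misread, so here is a minimal sketch that walks through it step by step. It applies the same rule as `CircuitBreaker`, but `SlidingWindowBreaker` and `FakeClock` are hypothetical names, the clock is injected so the walk-through is deterministic, and the small threshold and window are chosen only to keep the trace short.

```python
from collections import deque


class FakeClock:
    """Hypothetical manual clock so the example does not depend on real time."""

    def __init__(self):
        self.t = 0.0

    def __call__(self):
        return self.t


class SlidingWindowBreaker:
    """Same trip rule as the tutorial's CircuitBreaker, with an injectable clock."""

    def __init__(self, clock, fail_threshold=3, window=5, open_ms=500):
        self.clock = clock
        self.fail_threshold = fail_threshold
        self.window = window
        self.open_ms = open_ms
        self.events = deque(maxlen=window)   # True marks a failed call
        self.open_until_ms = 0.0

    def allow(self):
        return self.clock() >= self.open_until_ms

    def record(self, ok):
        self.events.append(not ok)
        window_full = len(self.events) >= self.window
        if window_full and sum(self.events) >= self.fail_threshold:
            self.open_until_ms = self.clock() + self.open_ms


clock = FakeClock()
cb = SlidingWindowBreaker(clock)

# Three failures alone do not trip the breaker: the window is not full yet.
for _ in range(3):
    cb.record(ok=False)
allowed_before_full = cb.allow()

# Two more results fill the window (5 events, 3 failures), so the breaker opens.
cb.record(ok=True)
cb.record(ok=True)
allowed_when_tripped = cb.allow()

# After the cool-down period the breaker lets traffic through again.
clock.t = 500.0
allowed_after_cooldown = cb.allow()

print(allowed_before_full, allowed_when_tripped, allowed_after_cooldown)
```

One consequence of this design is that the breaker cannot open during the first `window` calls, however badly they fail, and it has no separate half-open probing state: once `open_ms` elapses, all traffic is admitted again.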

    class DownstreamService:
        def __init__(self, fm: FailureModel, capacity_rps=250):
            self.fm = fm
            self.capacity_rps = capacity_rps
            self._inflight = 0

        async def handle(self, payload: dict):
            self._inflight += 1
            try:
                # Load factor rises with the number of calls currently in flight.
                load_factor = max(0.5, self._inflight / (self.capacity_rps / 10))
                lat, should_fail = self.fm.sample(load_factor)
                await asyncio.sleep(lat / 1000.0)
                if should_fail:
                    raise RuntimeError("downstream_error")
                return {"status": "ok"}
            finally:
                self._inflight -= 1


    async def rpc_call(
        svc,
        req,
        stats,
        timeout_ms=120,
        max_retries=0,
        cb=None,
        bulkhead=None,
    ):
        t0 = now_ms()
        # Fail fast while the circuit breaker is open.
        if cb and not cb.allow():
            stats.cb_open += 1
            stats.fail += 1
            return False
        attempt = 0
        while True:
            attempt += 1
            try:
                if bulkhead:
                    async with bulkhead:
                        await asyncio.wait_for(svc.handle(req), timeout=timeout_ms / 1000.0)
                else:
                    await asyncio.wait_for(svc.handle(req), timeout=timeout_ms / 1000.0)
                # Latency covers the whole call, including earlier failed attempts and backoff.
                stats.latencies_ms.append(now_ms() - t0)
                stats.ok += 1
                if cb:
                    cb.record(True)
                return True
            except asyncio.TimeoutError:
                stats.timeouts += 1
            except Exception:
                pass
            # Note: fail counts failed attempts, not failed requests.
            stats.fail += 1
            if cb:
                cb.record(False)
            if attempt <= max_retries:
                stats.retries += 1
                await asyncio.sleep(exp_backoff(attempt) / 1000.0)
                continue
            return False

We implement the synchronous RPC path and its interaction with the downstream service. We observe how timeouts, retries, and in-flight load directly affect latency and failure propagation. This path also highlights how tight coupling in RPC can amplify transient issues under bursty traffic.
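A rough way to quantify that amplification is to estimate how many downstream calls one request generates once retries are enabled. The sketch below assumes each attempt fails independently with a fixed probability, which is a simplification: in the simulation, timeouts also count as failures and the failure probability itself rises as retries add load. The function name `expected_attempts` is hypothetical; the probabilities come from the `FailureModel` defaults (0.05 when healthy, 0.05 + 1.0 * 0.40 = 0.45 at a load factor of 2.0).

```python
def expected_attempts(fail_p, max_retries):
    """Expected downstream calls per request, assuming independent attempt failures."""
    # Attempt i + 1 is made only if the first i attempts all failed,
    # which happens with probability fail_p ** i.
    return sum(fail_p ** i for i in range(max_retries + 1))


healthy = expected_attempts(fail_p=0.05, max_retries=3)
overloaded = expected_attempts(fail_p=0.45, max_retries=3)

print("healthy:", round(healthy, 3), "overloaded:", round(overloaded, 3))
```

Under these assumptions a healthy dependency sees about 5% extra traffic from retries, while an overloaded one sees roughly 74% extra, arriving exactly when it has the least spare capacity.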

    @dataclass
    class Event:
        id: int
        tries: int = 0


    class EventBus:
        def __init__(self, max_queue=5000):
            self.q = asyncio.Queue(maxsize=max_queue)

        async def publish(self, e: Event):
            # Non-blocking publish: returns False instead of waiting when the queue is full.
            try:
                self.q.put_nowait(e)
                return True
            except asyncio.QueueFull:
                return False


    async def event_consumer(
        bus,
        svc,
        stats,
        stop,
        max_retries=0,
        dlq=None,
        bulkhead=None,
        timeout_ms=200,
    ):
        while not stop.is_set() or not bus.q.empty():
            try:
                e = await asyncio.wait_for(bus.q.get(), timeout=0.2)
            except asyncio.TimeoutError:
                continue
            t0 = now_ms()
            e.tries += 1
            try:
                if bulkhead:
                    async with bulkhead:
                        await asyncio.wait_for(svc.handle({"id": e.id}), timeout=timeout_ms / 1000.0)
                else:
                    await asyncio.wait_for(svc.handle({"id": e.id}), timeout=timeout_ms / 1000.0)
                stats.ok += 1
                # Processing latency of this attempt only; time spent queued is not included.
                stats.latencies_ms.append(now_ms() - t0)
            except Exception:
                stats.fail += 1
                if e.tries <= max_retries:
                    # Back off, then put the event back on the queue for another attempt.
                    stats.retries += 1
                    await asyncio.sleep(exp_backoff(e.tries) / 1000.0)
                    await bus.publish(e)
                else:
                    # Retries exhausted: route the event to the dead-letter queue.
                    stats.dlq += 1
                    if dlq is not None:
                        dlq.append(e)
            finally:
                bus.q.task_done()

We build the asynchronous event-driven pipeline using a queue and background consumers. We process events independently of request submission, apply retry logic, and route unrecoverable messages to a dead-letter queue. This demonstrates how decoupling improves resilience while introducing new operational considerations.
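The retry-then-dead-letter flow is easier to follow on a deterministic toy run. The sketch below is a simplified stand-in rather than the tutorial's consumer: `consume`, `demo`, and the event names are hypothetical, events are plain `(id, tries)` tuples, and the handler fails by rule instead of by chance.

```python
import asyncio


async def consume(queue, handler, max_retries, done, dlq):
    """Process events forever; requeue failures until max_retries, then dead-letter."""
    while True:
        event_id, tries = await queue.get()
        try:
            await handler(event_id)
            done.append(event_id)
        except Exception:
            if tries < max_retries:
                queue.put_nowait((event_id, tries + 1))   # schedule another attempt
            else:
                dlq.append(event_id)                      # retries exhausted
        finally:
            queue.task_done()


async def demo():
    queue = asyncio.Queue()
    attempts = {}

    async def handler(event_id):
        attempts[event_id] = attempts.get(event_id, 0) + 1
        if event_id == "poison":
            raise RuntimeError("always fails")
        if event_id == "flaky" and attempts[event_id] == 1:
            raise RuntimeError("fails on the first attempt only")

    done, dlq = [], []
    for event_id in ["a", "flaky", "poison"]:
        queue.put_nowait((event_id, 0))

    worker = asyncio.create_task(consume(queue, handler, 2, done, dlq))
    await queue.join()            # returns once every event is done or dead-lettered
    worker.cancel()
    await asyncio.gather(worker, return_exceptions=True)
    return done, dlq, attempts


done, dlq, attempts = asyncio.run(demo())
print("done:", done, "dlq:", dlq, "attempts:", attempts)
```

The transient failure recovers on its retry, while the permanently failing event is attempted three times (one initial try plus two retries) and then parked in the dead-letter list instead of circulating forever. In a notebook, where an event loop is already running, `await demo()` would replace the `asyncio.run(demo())` call.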

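    # The original function signature was lost; these default values are assumptions.
    async def generate_requests(total=2000, burst=350, gap_ms=80):
        """Emit request ids in bursts of `burst`, pausing gap_ms between bursts."""
        reqs = []
        rid = 0
        while rid < total:
            n = min(burst, total - rid)
            for _ in range(n):
                reqs.append(rid)
                rid += 1
            await asyncio.sleep(gap_ms / 1000.0)
        return reqs


    async def main():
        random.seed(7)
        fm = FailureModel()
        svc = DownstreamService(fm)
        ids = await generate_requests()

        # RPC run: every request is issued at once, guarded by a breaker and a bulkhead.
        rpc_stats = Stats()
        cb = CircuitBreaker()
        bulk = Bulkhead(40)
        await asyncio.gather(*[
            rpc_call(svc, {"id": i}, rpc_stats, max_retries=3, cb=cb, bulkhead=bulk)
            for i in ids
        ])

        # Event-driven run: 16 consumers drain a shared queue.
        bus = EventBus()
        ev_stats = Stats()
        stop = asyncio.Event()
        dlq = []
        consumers = [
            asyncio.create_task(event_consumer(bus, svc, ev_stats, stop, max_retries=3, dlq=dlq))
            for _ in range(16)
        ]
        for i in ids:
            await bus.publish(Event(i))
        await bus.q.join()
        stop.set()
        for c in consumers:
            c.cancel()
        # Wait for the cancelled consumers to finish unwinding.
        await asyncio.gather(*consumers, return_exceptions=True)

        print(rpc_stats.summary("RPC"))
        print(ev_stats.summary("EventDriven"))
        print("DLQ size:", len(dlq))


    # Top-level await works in a notebook; in a plain script, use asyncio.run(main()).
    await main()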

We drive both architectures with bursty workloads and orchestrate the full experiment. We collect metrics, cleanly terminate consumers, and compare outcomes across RPC and event-driven executions. The final step ties together latency, throughput, and failure behavior into a coherent system-level comparison.
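Before reading the printed summaries, it is worth working out how hard each configuration can push the downstream service. The sketch below reuses the formulas from `DownstreamService.handle` and `FailureModel.sample` with the values used in `main()` (a bulkhead of 40 for RPC, 16 consumers for events, `capacity_rps=250`). The helper names are hypothetical, and the figures are worst-case bounds that assume every slot is busy at once.

```python
def load_factor(inflight, capacity_rps=250):
    # Same formula as DownstreamService.handle: capacity_rps / 10 concurrent calls is "full".
    return max(0.5, inflight / (capacity_rps / 10))


def overload_effect(lf, fail_prob=0.05, overload_fail_prob=0.40, overload_latency_ms=50.0):
    """Return (extra latency in ms, failure probability) at load factor lf."""
    if lf <= 1.0:
        return 0.0, fail_prob
    extra_ms = (lf - 1.0) * overload_latency_ms
    fail_p = min(0.95, fail_prob + (lf - 1.0) * overload_fail_prob)
    return extra_ms, fail_p


rpc_lf = load_factor(40)      # at most 40 calls in flight behind the bulkhead
event_lf = load_factor(16)    # at most 16 calls in flight, one per consumer

rpc_extra_ms, rpc_fail_p = overload_effect(rpc_lf)
event_extra_ms, event_fail_p = overload_effect(event_lf)

print("RPC:   ", round(rpc_lf, 2), round(rpc_extra_ms, 1), round(rpc_fail_p, 2))
print("Events:", round(event_lf, 2), round(event_extra_ms, 1), round(event_fail_p, 2))
```

With these settings the RPC path can reach a load factor of 1.6, adding about 30 ms per call and raising the failure probability to roughly 0.29, while the event path stays below 1.0 and keeps the base 0.05 failure rate. Part of the difference between the two runs therefore comes from the concurrency limits chosen in `main()`, not only from the communication style.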

In conclusion, we clearly saw the trade-offs between RPC and event-driven architectures in distributed systems. We observed that RPC offers lower latency when dependencies are healthy but becomes fragile under saturation, where retries and timeouts quickly cascade into system-wide failures. In contrast, the event-driven approach decouples producers from consumers, absorbs bursts through buffering, and localizes failures, but requires careful handling of retries, backpressure, and dead-letter queues to avoid hidden overload and unbounded queues. Through this tutorial, we demonstrated that resilience in distributed systems does not come from choosing a single architecture, but from combining the right communication model with disciplined failure-handling patterns and capacity-aware design.


Michal Sutter is a data science professional with a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michal excels at transforming complex datasets into actionable insights.